OT Remote Access Is a Trust-Boundary Problem

When OT teams discuss remote access, they often begin with the wrong questions. The conversation quickly turns to MFA, session recording, PAM integration, centralised management, or whether the product claims to follow Zero Trust principles. These features may matter, but they do not explain what kind of remote access architecture is actually being introduced, or what trust boundary it creates between the outside world and the control environment.

I recently ran an exercise with students in my Master’s class in rail cybersecurity. I gave them material from several leading OT remote access vendors, including Claroty, Xona, Xage, and Waterfall’s HERA, and asked them to assess how these solutions might replace a traditional OT jumphost architecture. The exercise was revealing. These were capable engineers, yet, like many industrial cybersecurity professionals, they were pulled towards market language and product presentations rather than seeing the underlying architectural differences clearly.

That is the point of this article: OT remote access should be assessed first in terms of operational need, architecture, and trust boundary, not product features.

Start with the task, not the tool

In OT, remote access is not simply a way for a user to reach a system from elsewhere. It is a design decision about what kind of influence is allowed to cross into an industrial environment. If that influence is not clearly understood, the rest of the security discussion becomes secondary. You can add approvals, logs, MFA, and policy controls to an architecture that still creates more consequence than the site realises.

Not all OT remote access addresses the same operational problem. Monitoring a remote process, troubleshooting a workstation, maintaining a historian, applying an occasional update, and giving a vendor emergency access to a control asset are different tasks. They do not require the same level of interaction, they do not justify the same boundary, and they should not automatically lead to the same design.

That is why OT remote access should be treated first as an architectural question. Before comparing products, it helps to separate the main patterns in use, understand how each one works, and then examine the cybersecurity implications of each.

In many projects, however, the requirement gets flattened into a generic need for secure remote access. Once that happens, the discussion drifts toward familiar IT questions:

- Is the user authenticated?

- Is the connection encrypted?

- Can the session be approved and recorded?

- Is there role-based access control?

- Is there integration with identity systems?

Those are useful control questions, but they come second. In OT, the more important question is what type of request is crossing the boundary in the first place. Is the remote user interacting with a screen? Sending protocol traffic? Acting on a single target or across part of the system?

From there, the architectural questions follow.

- Is there a trusted mediator inside OT?

- What role does the OT firewall play?

- Is there persistent connectivity from inside OT to an external service?

- Has a software component inside OT become part of the trust boundary?

Those details shape consequence far more than the dashboard features do.

What the trust boundary means in OT remote access

In remote access, the trust boundary is the point at which an external user, service, or system gains some ability to influence assets inside the OT environment. Different architectures place that boundary in different locations.

In a traditional design, the boundary may sit at a firewall and a remote desktop path to a jumphost. In a brokered design, it may shift into a software intermediary or a cloud-mediated service that maintains inside-out connectivity. In a hardware-enforced design, the architecture may go further by constraining what is allowed across the boundary at all.

From a cybersecurity perspective, the key questions are:

- What exactly crosses the boundary?

- What component must be trusted?

- What happens if that trusted component is compromised or misconfigured?

- Does the architecture reduce consequence, or mainly improve manageability?

These questions are more useful than asking whether the product is modern or legacy. Two solutions may both offer strong authentication and detailed auditability while creating very different residual risk.

Architectural patterns in OT remote access

Several architectural patterns recur in OT remote access. Vendors use different labels, but the underlying models are broadly consistent.

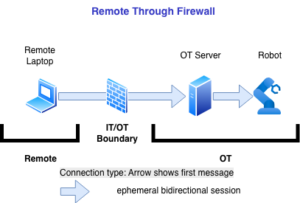

1. Firewall plus direct remote access path

This is the classic model. A firewall permits remote connectivity into a network segment, often to a server, engineering workstation, VPN concentrator, or management point. The user authenticates, a tunnel is established, and access to the destination is controlled through software-enforced policy rules.

This design is inherited largely from IT remote access practice. It can be strengthened through segmentation, tightly scoped rules, jump restrictions, MFA, and reduced exposure. In less mature environments, however, it often becomes an accumulation of exceptions. Over time, those exceptions can turn a remote access path into a broad conduit into OT.

The cybersecurity implication is straightforward: the architecture depends on software enforcement at the boundary and on policy remaining correct over time. If the tunnel, rules, credentials, or reachable services are broader than intended, an attacker’s room to manoeuvre increases. The model may be acceptable for some use cases, but it does not change the fact that bidirectional connectivity is being allowed across the boundary, with software policy carrying most of the burden.
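A minimal sketch of what reviewing that drift can look like, assuming a hypothetical and heavily simplified rule model (the `Rule` fields below are illustrative and match no particular firewall's syntax). It flags any rule whose source, destination, or port scope is open-ended, which is typically where exceptions widen a remote access path.

```python
from dataclasses import dataclass


# Hypothetical, simplified rule model. Real rule bases carry many more fields
# (zones, NAT, schedules, application IDs), so this only illustrates the idea.
@dataclass(frozen=True)
class Rule:
    name: str
    source: str        # "any" or a specific host/network label
    destination: str   # "any" or a specific host/network label
    ports: frozenset   # destination ports; an empty set means "any port"


def broad_rules(rules):
    """Return names of rules whose source, destination, or ports are open-ended."""
    return [
        r.name
        for r in rules
        if r.source == "any" or r.destination == "any" or not r.ports
    ]


rules = [
    Rule("vendor-rdp-to-ews", "vendor-vpn-pool", "ews-01", frozenset({3389})),
    Rule("temp-troubleshoot", "vendor-vpn-pool", "any", frozenset({3389})),
    Rule("legacy-exception", "any", "historian", frozenset()),
]
print(broad_rules(rules))  # ['temp-troubleshoot', 'legacy-exception']
```

A check like this says nothing about whether a narrow rule is justified; it only surfaces the rules where software policy is carrying more scope than a single named path.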

2. Jumphost or bastion-host architecture

A second pattern places a jumphost between the remote user and the OT assets. The user first connects to a controlled system in a DMZ or intermediate zone, and from there reaches the intended OT systems using remote desktop, management tools, or protocol-specific utilities. In most deployments, two firewalls are used: one between the remote zone and the DMZ, and another between the DMZ and the OT zone.

This pattern improves on direct reachability by concentrating authentication, logging, approvals, and session control at a narrower point. It can improve monitoring and make third-party access easier to manage. For many organisations, it is the first pattern that brings real discipline to remote access.

The cybersecurity question, however, is not whether the jumphost is useful. It is what trust has now been concentrated in that host. Once the jumphost becomes the gateway into OT, it becomes part of the trust boundary. If it is compromised, misconfigured, overprivileged, or allowed to reach too much, it can become a pivot point into the industrial environment. A jumphost improves control, but it also concentrates consequence.
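One way to make "concentrated consequence" concrete is to treat reachability as a graph and ask what becomes reachable once the jumphost is held. The sketch below assumes a hand-written, hypothetical reachability map, and assumes that any host an attacker reaches can itself be used to go further. Real environments would derive this map from rule bases, credentials, and tooling.

```python
from collections import deque

# Hypothetical reachability map: which hosts each host can open sessions to
# after firewall rules are applied.
REACHABLE = {
    "remote-user": ["jumphost"],
    "jumphost": ["ews-01", "historian"],
    "ews-01": ["plc-a", "plc-b"],
    "historian": [],
    "plc-a": [],
    "plc-b": [],
}


def blast_radius(start, graph):
    """Return every host reachable from `start` by chaining permitted paths (BFS)."""
    seen = {start}
    queue = deque([start])
    while queue:
        host = queue.popleft()
        for nxt in graph.get(host, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(start)
    return sorted(seen)


print(blast_radius("jumphost", REACHABLE))  # ['ews-01', 'historian', 'plc-a', 'plc-b']
```

In this toy map the jumphost only talks to two hosts directly, yet holding it puts both PLCs within reach through the engineering workstation.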

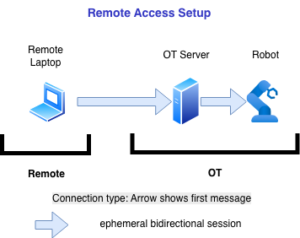

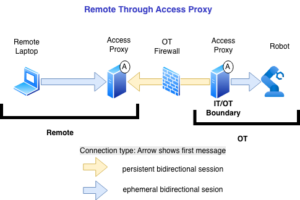

3. Brokered remote access architecture

This pattern is common in modern OT remote access products. Instead of opening inbound connectivity into OT, a software component inside the OT environment establishes outbound connectivity to an external service or broker. Remote users authenticate to that service, which then brokers or relays access to internal assets.

From an operational point of view, this approach is attractive. It removes some of the friction of inbound firewall changes, is easier to deploy across distributed environments, and often includes workflow features such as approvals, policy control, centralised inventory, session recording, and integration with identity systems.

Some features in this model are tailored to industrial use cases. One example is streamed sessions, where the remote user interacts with a screen image or rendered session rather than connecting more directly to OT protocols or systems. The user sees a workstation, HMI, or application session remotely, while the OT protocol activity remains local to the environment in which that session is running.

The architectural point, however, is that the trust boundary has not disappeared. It has moved. The boundary now includes the internal software component, the brokered communications model, and the service that mediates access. Administrative friction is reduced by placing more trust in an intermediary.

That does not make the model wrong. But it does mean the evaluation must be explicit. What protocols or actions can be relayed? Is the connectivity persistent? How much authority does the intermediary have? If the software component or broker is compromised, misconfigured, or trusted too broadly, what paths into OT now exist? These are the questions that matter more than whether the product interface looks cleaner than a VPN.
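The questions above can be written down as a small, explicit record. This is a hypothetical sketch: `BrokerConfig`, its fields, and the channel names are illustrative assumptions, not any vendor's configuration model. It answers one narrow question: if the broker were compromised, is the path standing, how many sites sit behind it, and which relayed channels go beyond input into a streamed screen?

```python
from dataclasses import dataclass, field

# Channels that let traffic or files act on OT directly, rather than only
# input into a streamed session. The names are illustrative assumptions.
BEYOND_STREAMING = {"modbus", "s7", "dnp3", "opc-ua", "file-transfer"}


@dataclass
class BrokerConfig:
    """Hypothetical description of one brokered deployment."""
    relayed: set = field(default_factory=set)   # channels the broker can relay
    persistent: bool = True                     # inside agent keeps its outbound link up
    sites: list = field(default_factory=list)   # OT sites served by this broker


def exposure_if_broker_compromised(cfg):
    """Summarise what an attacker holding the broker could reach."""
    return {
        "standing_path": cfg.persistent,                      # usable at any time
        "sites_affected": len(cfg.sites),                     # one broker, many sites
        "beyond_streaming": sorted(cfg.relayed & BEYOND_STREAMING),
    }


cfg = BrokerConfig(
    relayed={"screen-stream", "modbus", "file-transfer"},
    persistent=True,
    sites=["depot-1", "depot-2", "substation-7"],
)
print(exposure_if_broker_compromised(cfg))
# {'standing_path': True, 'sites_affected': 3, 'beyond_streaming': ['file-transfer', 'modbus']}
```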

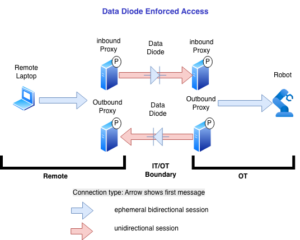

4. Hardware-enforced remote access or constrained-boundary architecture

There is also a different architectural class that begins with a narrower question: what should be allowed to cross the boundary at all, and how can the boundary be enforced in a way that does not depend entirely on software trust? In hardware-enforced models, the goal is not simply to manage a bidirectional session more carefully. It is to constrain the interaction structurally, using unidirectional gateways or other hardware-based mechanisms that enforce directional flow.

Different products and architectures implement this in different ways. Some focus on remote viewing with constrained control paths. Others rely on hardware-enforced directionality or physically mediated switching to separate functions that many software-based remote access systems combine into a single live session. One recent example is HERA by Waterfall Security Solutions, which uses hardware-based unidirectional data flow to enforce directional control.

From a cybersecurity perspective, this class should not be treated as just another variation of secure remote access. The design question is different. Rather than assuming a broad interactive session and then layering policy on top, these architectures begin by asking whether certain traffic, commands, or interaction patterns should be prevented from crossing the boundary in the first place. The trade-off is less convenience and sometimes less flexibility, but also a different failure model. That difference matters in high-consequence environments where the main concern is not administrative efficiency but limiting what a remote compromise can do.
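The directional idea can be illustrated, very loosely, in software. The toy below hands the OT side an object that can only send and the outside an object that can only receive. It is an analogy under stated assumptions, not an implementation of any product: in a hardware-enforced design the reverse path is physically absent, whereas here it is merely not exposed, which is exactly the kind of software trust those architectures aim to avoid.

```python
from collections import deque


class _Transmitter:
    """Held inside OT: can only push data outward."""

    def __init__(self, buf):
        self._buf = buf

    def send(self, data):
        self._buf.append(data)


class _Receiver:
    """Held outside OT: can only read what OT chose to send."""

    def __init__(self, buf):
        self._buf = buf

    def receive(self):
        return self._buf.popleft() if self._buf else None


def one_way_link():
    """Toy unidirectional link. The reverse path is merely not exposed here;
    in hardware-enforced designs it does not physically exist."""
    buf = deque()
    return _Transmitter(buf), _Receiver(buf)


tx, rx = one_way_link()
tx.send("pump-3 pressure=4.2 bar")
print(rx.receive())         # pump-3 pressure=4.2 bar
print(hasattr(rx, "send"))  # False
```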

Why these patterns should not be treated as interchangeable

A common mistake in OT remote access discussions is to place all of these models on a single maturity ladder, as though they were simply cleaner or newer versions of the same design. They are not.

A firewall-reliant design, a jumphost, a session broker, and a hardware-enforced architecture place trust in different locations and allow different kinds of influence to cross the boundary. They also fail in different ways. Some improve administration. Some improve visibility and accountability. Some reduce attack surface. Some shift trust into a different intermediary.

That is why product comparisons based only on feature checklists often mislead buyers. Session recording, approvals, browser-based access, vaulting, and identity integration may all be useful, but none of them shows where trust sits or what the residual consequence looks like if the trusted component is compromised, misconfigured, or simply given too much authority.

Cybersecurity implications that matter across all patterns

Once the architecture is clear, the security discussion improves. Regardless of the pattern, the most important questions tend to be these:

What crosses the boundary?

Not all remote access carries the same kind of influence across the trust boundary. Some architectures allow protocol traffic. Others allow keyboard and mouse interaction with a remote session. Others permit file transfer, credential use, command execution, or brokered application access. The type of influence matters because it determines what an attacker could do if the remote path is abused.

What component must be trusted?

Every architecture has a trusted component. It may be a firewall rule base, a VPN service, a jumphost, an agent inside OT, a cloud broker, a remote session server, or a hardware control. That dependency should be made explicit. If a team cannot identify the trusted component clearly, it probably does not understand the architecture well enough.

Is connectivity persistent?

Persistent connectivity changes the exposure model. An always-available brokered path, a long-lived tunnel, or a continuously connected intermediary may improve support and administration, but it also creates a standing relationship that must be defended continuously. In OT, persistence should be justified, not assumed.
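A simple way to compare persistence is to count the hours a path is actually usable. The sketch assumes each access path can be described as a list of (start, end) windows during which it can carry a session; overlapping windows are merged so that extensions are not double-counted. The dates are arbitrary.

```python
from datetime import datetime, timedelta


def exposure_hours(windows):
    """Hours during which the remote path is usable, merging overlapping windows."""
    total = timedelta()
    current_start = current_end = None
    for start, end in sorted(windows):
        if current_end is None or start > current_end:
            # Close the previous merged window, if any, and start a new one.
            if current_end is not None:
                total += current_end - current_start
            current_start, current_end = start, end
        else:
            # Overlapping window: extend the current merged window.
            current_end = max(current_end, end)
    if current_end is not None:
        total += current_end - current_start
    return total.total_seconds() / 3600


week = datetime(2026, 4, 6)  # arbitrary start date, for illustration only
always_on = [(week, week + timedelta(days=7))]
on_demand = [
    (week + timedelta(hours=9), week + timedelta(hours=11)),
    (week + timedelta(days=3, hours=14), week + timedelta(days=3, hours=16)),
    (week + timedelta(days=3, hours=15), week + timedelta(days=3, hours=17)),  # extension
]
print(exposure_hours(always_on))  # 168.0
print(exposure_hours(on_demand))  # 5.0
```

Hours alone do not capture consequence, but the gap between 168 and 5 is a useful reminder of what a standing relationship means for the defender.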

What happens on failure?

This is one of the most important questions and one of the least discussed. If the intermediary is compromised, what can the attacker now do? If the session-control layer fails open, what remains protected? If the trusted software behaves incorrectly, is the result merely an administrative inconvenience, or does it create a path to manipulate the control environment? OT security decisions should be judged heavily by failure behaviour.
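Fail-open versus fail-closed behaviour can be stated in a few lines. In this hypothetical sketch, `check_approval` stands in for whatever policy or approval service gates a session, and `ApprovalServiceDown` for that service being unreachable. The point is only that, when the control layer itself fails, the `fail_open` choice decides the outcome rather than the approval logic.

```python
class ApprovalServiceDown(Exception):
    """Raised when the (hypothetical) approval/policy service cannot be reached."""


def allow_session(check_approval, fail_open):
    """Decide whether a remote session may start.

    check_approval: callable returning True/False, or raising ApprovalServiceDown.
    fail_open: if True, an unreachable policy layer lets the session through.
    """
    try:
        return check_approval()
    except ApprovalServiceDown:
        return fail_open


def unreachable():
    raise ApprovalServiceDown()


print(allow_session(unreachable, fail_open=True))     # True
print(allow_session(unreachable, fail_open=False))    # False
print(allow_session(lambda: False, fail_open=True))   # False
```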

Does the design reduce consequence or just reorganise it?

Some architectures reduce the consequences of compromise by limiting function, separating flows, or constraining authority. Others improve the manageability of a live remote access model. Both may be useful, but they are not the same achievement. In a high-consequence environment, that distinction matters.

A way to evaluate OT remote access

When evaluating an OT remote access design, I would start with a small set of questions:

- What operational task requires remote access?

- What exactly crosses the trust boundary?

- What intermediary, if any, must now be trusted?

- Is the connectivity persistent, time-limited, or enabled only on demand?

- If the intermediary is compromised, how much authority does the attacker gain?

- Does the architecture reduce the consequence of failure, or mainly improve workflow and administration?

- Can the team deploying it explain the trust model in plain language?

If the answer to the last question is no, the organisation is probably not evaluating architecture yet. It is evaluating product language.
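The same questions can be captured as a structured record that refuses to produce a plain-language trust model until every question has an answer. The `Assessment` fields and the summary wording are illustrative assumptions: a sketch of the discipline, not a formal method.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Assessment:
    """Hypothetical record of answers to the evaluation questions."""
    task: Optional[str] = None
    crosses_boundary: Optional[str] = None
    trusted_intermediary: Optional[str] = None
    connectivity: Optional[str] = None  # "persistent", "time-limited" or "on-demand"
    authority_if_compromised: Optional[str] = None
    reduces_consequence: Optional[bool] = None


def trust_model_summary(a):
    """Return a plain-language trust model, or list what is still unanswered."""
    missing = [f.name for f in fields(a) if getattr(a, f.name) is None]
    if missing:
        return "Not yet an architecture evaluation; unanswered: " + ", ".join(missing)
    outcome = "reduces" if a.reduces_consequence else "mainly reorganises"
    return (
        f"For {a.task}, {a.crosses_boundary} crosses the boundary via "
        f"{a.trusted_intermediary}, with {a.connectivity} connectivity. "
        f"If that intermediary is compromised, the attacker gains "
        f"{a.authority_if_compromised}. The design {outcome} consequence."
    )


print(trust_model_summary(Assessment(task="vendor support")))
print(trust_model_summary(Assessment(
    task="monthly historian maintenance",
    crosses_boundary="keyboard and mouse input to a streamed session",
    trusted_intermediary="an on-site session broker",
    connectivity="on-demand",
    authority_if_compromised="interactive control of the historian server only",
    reduces_consequence=True,
)))
```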

Closing

OT remote access is not going away, nor should it. The operational need is real. Plants, fleets, substations, remote assets, and support organisations often depend on it. But the subject needs to be discussed more carefully than it usually is.

The first question should not be whether the product has enough security features. It should be what trust boundary the architecture creates and what kind of influence it allows to cross into the OT environment.

Once that is clear, the comparison becomes more honest. Some architectures will make sense for some tasks. Some will be acceptable only in lower-consequence environments. Some will provide stronger operational control while still preserving broad consequence if they fail. And some belong in a separate class because they are designed to reduce what can cross the boundary in the first place.

That is why OT remote access is best understood not as a generic access problem, but as a trust-boundary problem.

Need help evaluating your OT remote access architecture?

At OTIFYD, we work with organisations to map remote access designs to real operational risk, not vendor claims.

We help teams:

- Identify where their actual trust boundary sits (not where they assume it sits)

- Compare architectures based on consequence, not feature lists

- Redesign remote access to reduce blast radius in high-consequence environments

If your current model cannot be clearly explained in terms of what crosses the boundary and what happens if it fails, it may be time to take a second look.

By Dr Jesus Molina (April 2026)